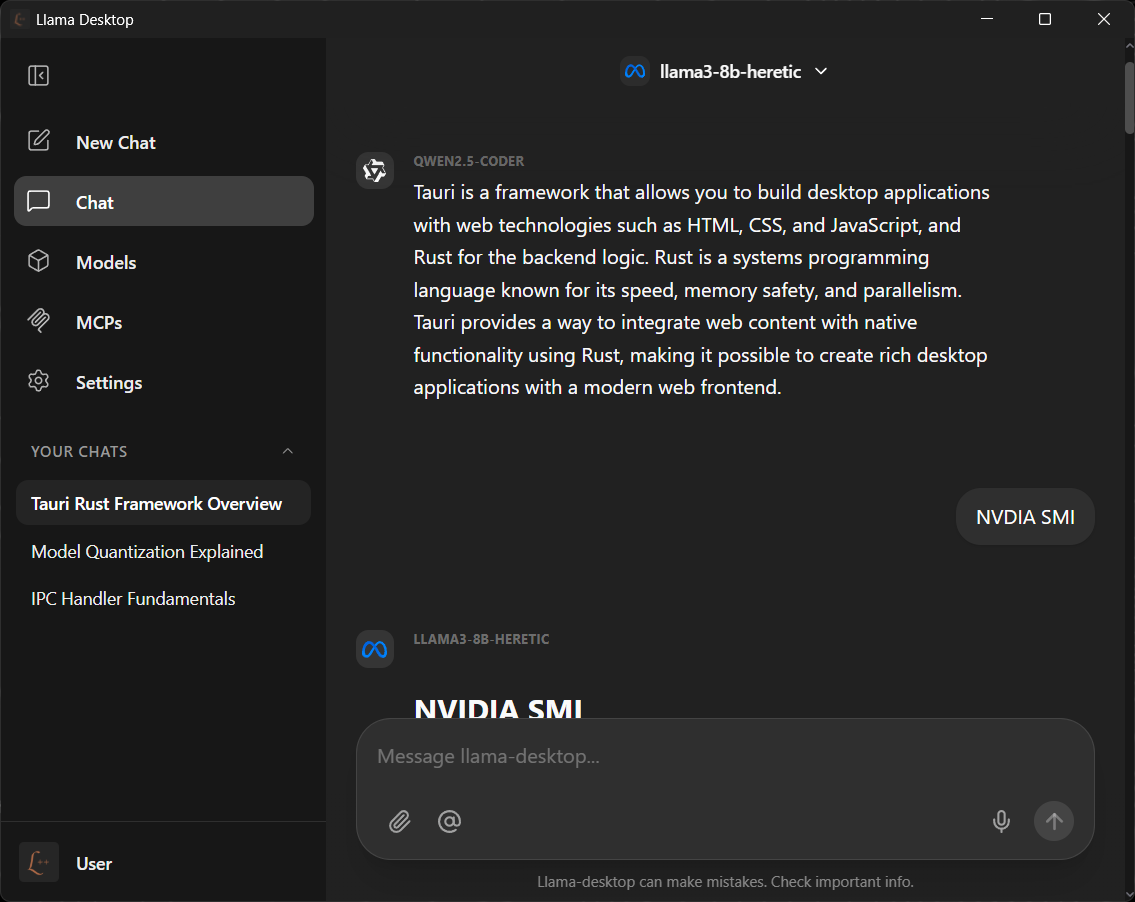

Llama Desktop

About the project

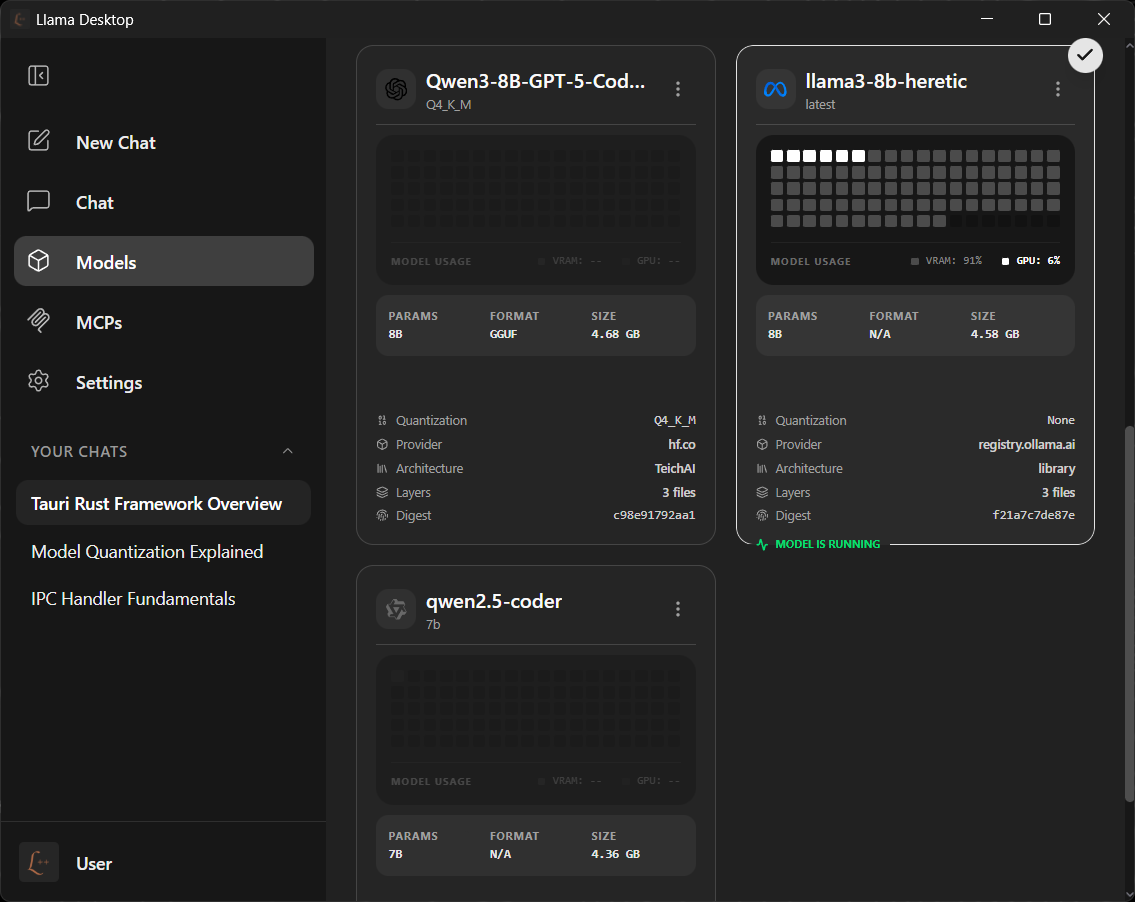

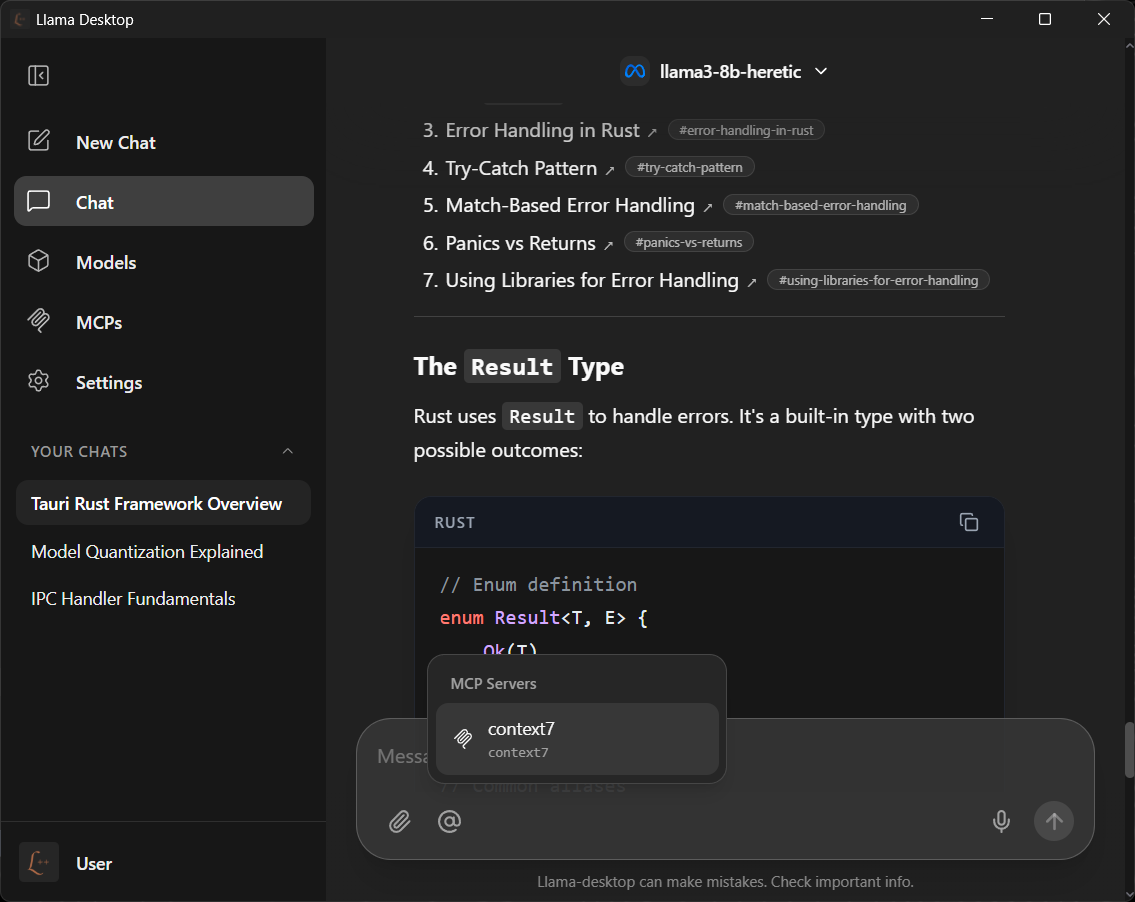

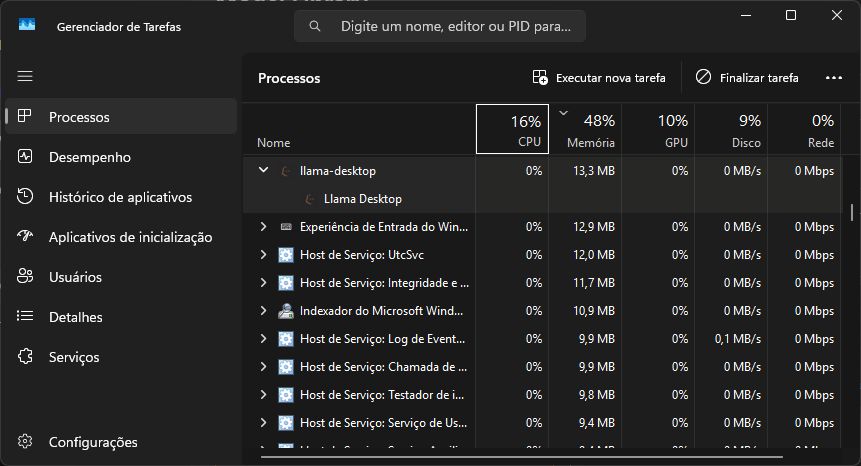

Llama Desktop is a desktop application for running large language models (LLMs) locally. Built with Tauri v2 and Rust, it provides a secure environment for running GGUF models with hardware acceleration, and it can be extended through MCP (Model Context Protocol).

The project integrates llama.cpp directly and can reuse models from a local Ollama installation by automatically parsing its manifests and resolving the blobs they reference. It follows an actor-based service architecture in which model management and the MCP bridges run as decoupled, thread-safe services.
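
To illustrate the actor idea, here is a minimal sketch using only the Rust standard library. It is not taken from the Llama Desktop codebase: the names `ModelMsg`, `ModelHandle`, and `spawn_model_actor` are hypothetical, and the real services may well use an async runtime rather than plain threads. It shows the general pattern: one thread owns the state, and every other part of the program talks to it through messages.

```rust
use std::collections::HashMap;
use std::sync::mpsc::{self, Sender};
use std::thread;

/// Messages understood by the (hypothetical) model-manager actor.
enum ModelMsg {
    /// Record where the GGUF file for `name` lives.
    Register { name: String, path: String },
    /// Ask for the path of `name`; the answer is sent back on `reply`.
    Lookup { name: String, reply: Sender<Option<String>> },
}

/// Cloneable handle. Callers hold only a channel sender, never the state.
#[derive(Clone)]
struct ModelHandle {
    tx: Sender<ModelMsg>,
}

impl ModelHandle {
    /// Fire-and-forget registration; the send error is ignored if the actor is gone.
    fn register(&self, name: &str, path: &str) {
        let _ = self.tx.send(ModelMsg::Register {
            name: name.to_string(),
            path: path.to_string(),
        });
    }

    /// Request/response lookup using a one-off reply channel.
    fn lookup(&self, name: &str) -> Option<String> {
        let (reply, rx) = mpsc::channel();
        self.tx
            .send(ModelMsg::Lookup { name: name.to_string(), reply })
            .ok()?;
        rx.recv().ok().flatten()
    }
}

/// Spawn the actor thread. The map is owned by that thread alone,
/// so no mutex is needed to keep it consistent.
fn spawn_model_actor() -> ModelHandle {
    let (tx, rx) = mpsc::channel::<ModelMsg>();
    thread::spawn(move || {
        let mut models: HashMap<String, String> = HashMap::new();
        // The loop ends once every handle (sender) has been dropped.
        for msg in rx {
            match msg {
                ModelMsg::Register { name, path } => {
                    models.insert(name, path);
                }
                ModelMsg::Lookup { name, reply } => {
                    let _ = reply.send(models.get(&name).cloned());
                }
            }
        }
    });
    ModelHandle { tx }
}
```

A caller would create one handle with `spawn_model_actor()`, clone it into whichever components need it (for example an MCP bridge), and call `register` or `lookup` from any thread. Because messages are processed one at a time by the owning thread, the services stay decoupled without sharing locks.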